How I Created A Kids Kindle Book Illustrated by AI

I recreated the classic kids' story "We're Going on a Bear Hunt" with illustrations generated entirely by AI - and now you can too!

There has been a recent boom in AI tools that generate content from prompts such as text and images. Stable Diffusion is a popular tool that was released earlier this year by Stability AI and its research collaborators (not by OpenAI, which makes DALL-E), and it is accessible to the public! So how does Stable Diffusion work?

Stable Diffusion

Given a noisy image like number 3 or 4 on the right, how easy is it to reconstruct the original image 1? Learning to do that reconstruction is at the heart of how the Stable Diffusion model was trained to generate new images. The diffusion process is the stepwise addition of noise to an image. The model is trained to infer the noise that was added at each step, and ultimately to reconstruct the original image.
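To make the "stepwise addition of noise" concrete, here is a minimal NumPy sketch of the forward (noising) half of a diffusion process. It is an illustration under assumptions, not Stable Diffusion's actual code: the 1000-step linear schedule and its start and end values are borrowed from the original diffusion-model papers, the 8x8 "image" is random data, and the function names are made up for this example.

```python
import numpy as np

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances (assumed values, for illustration only)."""
    return np.linspace(beta_start, beta_end, num_steps)

def add_noise(x0, t, alphas_cumprod, rng):
    """Jump straight to step t: mix the clean image with Gaussian noise."""
    noise = rng.standard_normal(x0.shape)
    abar = alphas_cumprod[t]  # fraction of the original signal variance left at step t
    xt = np.sqrt(abar) * x0 + np.sqrt(1.0 - abar) * noise
    return xt, noise

rng = np.random.default_rng(0)
betas = linear_beta_schedule(1000)
alphas_cumprod = np.cumprod(1.0 - betas)

x0 = rng.uniform(-1.0, 1.0, size=(8, 8))  # stand-in for a real image scaled to [-1, 1]
for t in (0, 250, 999):
    xt, noise = add_noise(x0, t, alphas_cumprod, rng)
    print(f"step {t}: signal weight = {np.sqrt(alphas_cumprod[t]):.4f}")
```

During training, a model is shown `xt` and the step `t` and asked to predict `noise`. Once it can do that, subtracting the predicted noise step by step turns pure noise into an image.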

Without going too much into detail (for this high-level intro), the architecture of this model is below:

During the denoising step there are two funnels: one funneling down to a smaller size (called the encoder), and the other funneling back up (called the decoder). Together these make up a standard type of architecture called an autoencoder. It takes an image and compresses the information in it into a smaller space as efficiently as possible, i.e. without losing too much information.
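The compress-then-reconstruct idea can be tried on a toy scale. The sketch below builds a linear autoencoder with PCA in NumPy. It is not the autoencoder Stable Diffusion uses (that one is a learned neural network); the synthetic data, the sizes, and the function names are all assumptions made for the example.

```python
import numpy as np

def fit_linear_autoencoder(data, latent_dim):
    """Fit PCA, which gives the best *linear* encoder/decoder pair."""
    mean = data.mean(axis=0)
    # Rows of vt are the principal directions, strongest first.
    _, _, vt = np.linalg.svd(data - mean, full_matrices=False)
    return mean, vt[:latent_dim]

def encode(x, mean, components):
    """Funnel down: project each sample onto a few directions."""
    return (x - mean) @ components.T

def decode(z, mean, components):
    """Funnel up: rebuild the full-size sample from its latent code."""
    return z @ components + mean

rng = np.random.default_rng(0)
# Toy "images": 200 samples of 64 pixels that really vary along only 4 hidden factors.
factors = rng.normal(size=(200, 4))
mixing = rng.normal(size=(4, 64))
images = factors @ mixing + 0.01 * rng.normal(size=(200, 64))

mean, components = fit_linear_autoencoder(images, latent_dim=4)
latents = encode(images, mean, components)
reconstructed = decode(latents, mean, components)
error = np.mean((images - reconstructed) ** 2)
print("latent shape:", latents.shape, "mean squared error:", error)
```

Each 64-pixel sample is squeezed into 4 numbers and rebuilt with very little loss, because the data only had 4 underlying factors to begin with. Real images are far less tidy, which is why a neural network is used instead.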

As humans, we are pretty good at this. For example, if I asked a 5-year-old to look at image 3 above and reconstruct the original with crayons, they might color the dog's ears, snout, and part of the body black, and the rest of the body white. They might also choose to color the background white or some other uniform color. This is where the bottleneck of the funnel, or “Latent Representation”, comes in.

If you think about it - the same information can also be given by text! For example, if I ask an artist to draw “A black and white Boston Terrier with a white background”, they would produce something similar to the image above.

Once the latent representation is obtained from training via diffusion, all that remains is a way to map any input text into the latent space, which is the job of the text encoder. This information is then passed through the decoder (the reverse of the bottleneck), and a new image gets generated. Pretty neat, right?

The Stable Diffusion model was trained on text-image pairs drawn from LAION-5B, a public dataset of more than 5 billion text-image pairs.

Image Prompts

If you have not heard of Hugging Face and are interested in easily making your own AI-generated content, go check them out ASAP! I used the Hugging Face implementation of Stable Diffusion, which is really straightforward. Feed in a text input and get an image output - that’s it!
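For readers who want to try this, here is a minimal sketch of what "text in, image out" can look like with the Hugging Face `diffusers` library. Treat it as a starting point under assumptions rather than my exact setup: the model id `CompVis/stable-diffusion-v1-4`, the step count, the guidance scale, the `build_prompt` helper, and the example prompt are all illustrative choices, and running it needs `torch`, `diffusers`, and ideally a GPU.

```python
def build_prompt(scene, style="children's book illustration, watercolor"):
    """Hypothetical helper: append one shared style so pages look consistent."""
    return f"{scene.strip()}, {style}"

def generate_image(prompt, seed=0, model_id="CompVis/stable-diffusion-v1-4", device="cuda"):
    """Generate one image for a prompt; a fixed seed makes the result repeatable."""
    # Imported here so the helper above works even without these packages installed.
    import torch
    from diffusers import StableDiffusionPipeline

    # Half precision saves GPU memory; fall back to float32 on CPU.
    dtype = torch.float16 if device == "cuda" else torch.float32
    pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=dtype)
    pipe = pipe.to(device)

    generator = torch.Generator(device).manual_seed(seed)
    result = pipe(prompt, generator=generator, num_inference_steps=50, guidance_scale=7.5)
    return result.images[0]  # a PIL image

# Example usage (downloads several GB of model weights on first run):
# image = generate_image(build_prompt("a family walking through tall, wavy grass"), seed=42)
# image.save("page_01.png")
```

Changing the seed gives a different image for the same prompt, which is handy when the first attempt does not look right.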

When I give it the following prompt, these are the results I get (the output is random, so the same text input will give you different images next time unless you fix the random seed):

However, as you can see here and below when zoomed in, in many cases Stable Diffusion has a hard time generating faces with the necessary granularity. The two people in the cave look quite creepy!

Apparently this is a well-known limitation, due to the lack of sufficiently granular training data on faces and limbs in the LAION dataset.

Putting Images And Words Together

You can find plenty of Google Slides or PowerPoint templates for e-books. Lay out the images and text in one, save it as a PDF, convert it to the right format (.MOBI for Kindle), and you are almost there.

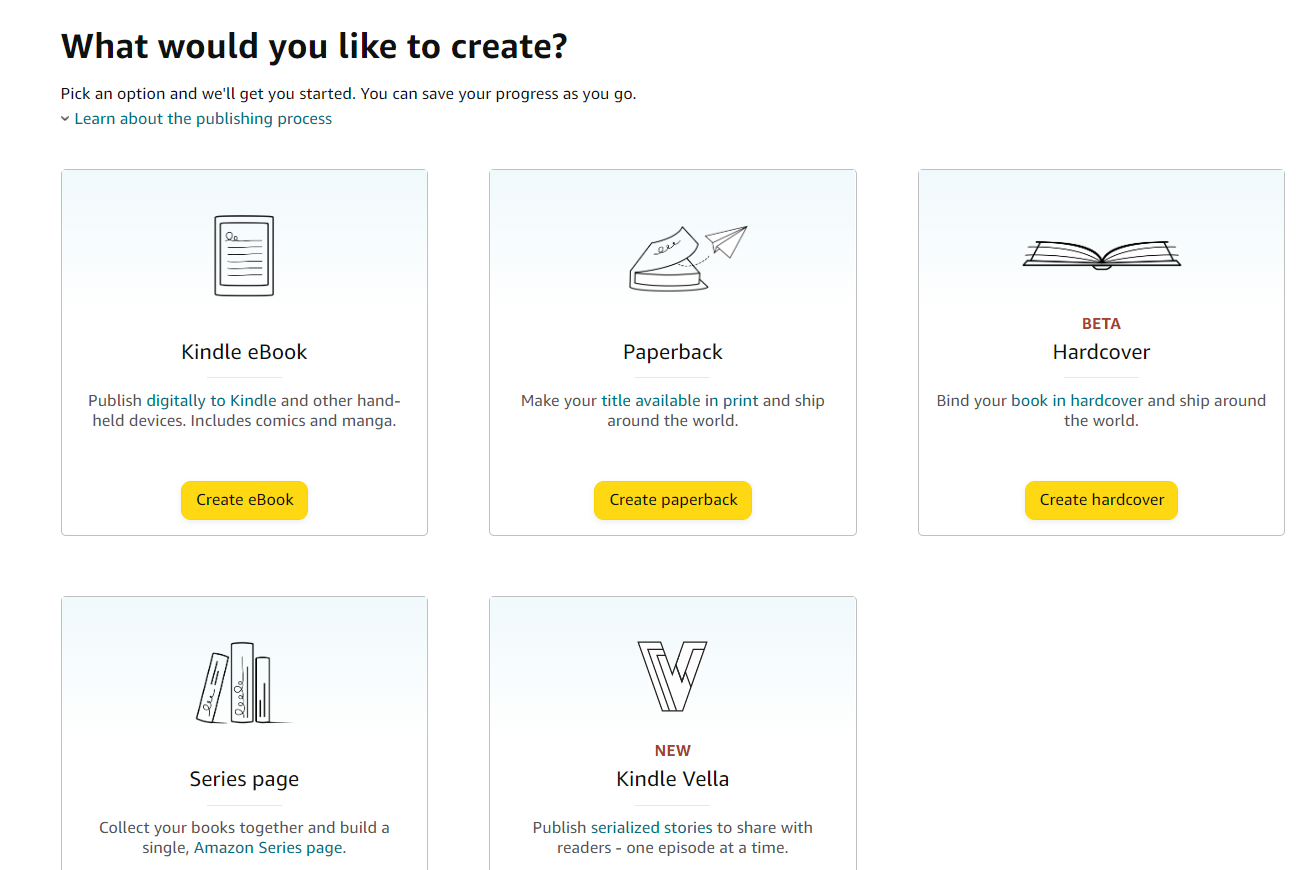

Next, create an account on Kindle Direct Publishing and follow the steps to create an E-Book.

Apart from Stable Diffusion, there has been an explosion of AI models that generate images from text inputs, including DALL-E 2, Midjourney, Imagen, etc.

There are also many other aspects of content creation that can benefit greatly from AI. People are exploring how AI can help generate fiction. I don’t expect these tools to replace writers anytime soon - just to help creators with tasks such as generating story ideas, illustrations, filler content, etc.

I expect more such models at the intersection of AI, creativity, and content creation. It’s actually a great time to be a content creator, as you have a tireless AI assistant!

Here’s the link to my E-Book, now available on Amazon!

Aaand that’s it!

If you enjoyed this post, please share it on social media, or even with just one person who you think might enjoy holistic perspectives on the interconnections between technology and modern societies. Feel free to also leave comments in the post discussions on the cyber-physical Substack page. This is a small but growing effort, and I hope to share my journey in understanding and building resilient societies.